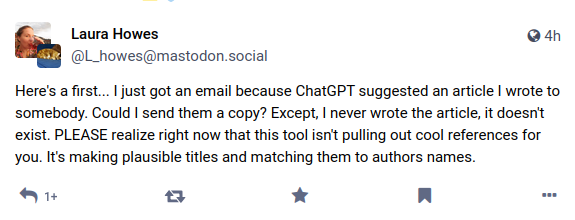

New technology opens up new and exciting possibilities. Thus far, AI is not ‘thinking’ for itself; it depends on human input and requests. Humans, on the other hand, come in all types, and not all of them are benign.

Machine learning (ML) technology, which enables machines to search through billions of possibilities, clearly provides a powerful augmentation of human capabilities (power steering for the mind, as it were). Whether this is good news for humanity, however, depends on whose minds it is augmenting.

Policing the virtual world

“That which is illegal and harmful in the physical world should be illegal in the virtual, synthetic world as well.”

National cybercrime does not exist: almost all cases have an international dimension.

Given the unlimited scope of the virtual world, policing it in any form will be a great challenge. At some point, the principle of total freedom of speech may need to be qualified.

A good start would be to prevail upon the operators of social media platforms, such as Facebook, Twitter and TikTok, to monitor and moderate their content. This may require legislation, as ‘persuasion’ has not worked well so far.

As technology, both good and bad, is now accelerating exponentially, human control needs to be proactive in uncharted territory devoid of ethics.

And in case you were thinking “I don’t play online games” or “I don’t use the interweb”: you do not need to. Technology will reach you one way or another, through the post, taxes, mobile phones, medical care…